Deep learning has matured quickly. A few years ago, the focus was on building better models—more layers, better accuracy, bigger datasets. Today, that’s no longer the bottleneck.

The real challenge is turning those models into systems that actually work.

A model can perform well in isolation and still fail in production. It might not scale, it might break when data changes, or it might never integrate properly into business workflows. This is why companies are shifting from model thinking to system thinking.

Deep learning is no longer just about neural networks. It’s about everything around them.

In practice, many teams discover that building a strong model is only the starting point. The harder part is designing how that model fits into a larger environment—how it receives data, interacts with other systems, and continues to perform over time. This is where working with teams experienced in end-to-end delivery, such as Tensorway’s Deep Learning service, becomes relevant, especially for projects that need to move beyond experimentation into stable production systems.

The shift from model-centric to system-centric thinking

A deep learning model is only one component of a larger system.

Modern solutions include:

- Data pipelines

- Training environments

- Deployment infrastructure

- APIs and integrations

- Monitoring and governance

Instead of treating models as final outputs, companies now treat them as dynamic components that evolve within a system.

This reflects how AI is actually used in production. Systems must adapt, respond to new data, and remain stable under real conditions.

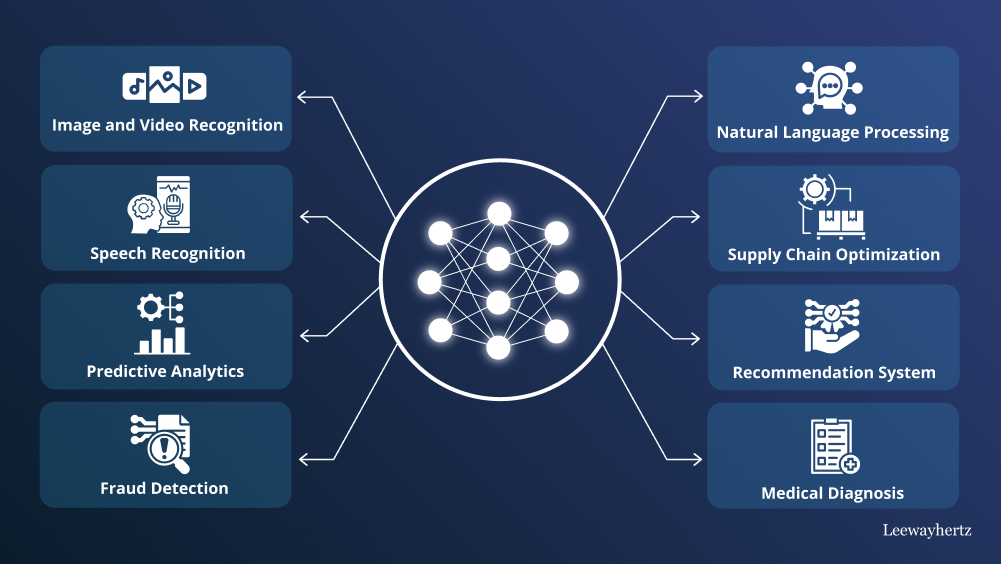

What a deep learning model actually does

At its core, a deep learning model transforms raw data into useful outputs.

It uses the stacked layers of a neural network to:

- Detect patterns

- Extract features

- Make predictions

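Each layer processes data at a different level of abstraction. Early layers detect simple signals, while deeper layers identify more complex structures. In an image model, for example, early layers typically respond to edges and textures, and deeper layers respond to object parts or whole objects.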

This ability to learn features automatically is what makes deep learning powerful, but it also makes it harder to manage without proper system design.
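As a rough illustration of layers building on each other, here is a toy two-layer forward pass in plain Python. The weights are hand-picked for the example rather than learned, and the function names (`dense`, `relu`, `forward`) are hypothetical. The first layer detects two simple signals ("a is larger" and "b is larger"), and the second layer combines them into the absolute difference of the inputs.

```python
# Toy forward pass: each layer transforms the previous layer's output.
# Weights are hand-picked for illustration; a real model would learn them.

def relu(values):
    """Keep positive signals, zero out negative ones."""
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    """One fully connected layer: one weighted sum per output unit."""
    return [
        sum(x * w for x, w in zip(inputs, row)) + b
        for row, b in zip(weights, biases)
    ]

def forward(inputs):
    # Layer 1: two simple detectors, (a - b) and (b - a), clipped at zero.
    hidden = relu(dense(inputs, [[1.0, -1.0], [-1.0, 1.0]], [0.0, 0.0]))
    # Layer 2: combine the detectors into a single output, |a - b|.
    output = dense(hidden, [[1.0, 1.0]], [0.0])
    return output[0]

print(forward([3.0, 1.0]))  # 2.0
print(forward([1.0, 4.0]))  # 3.0
```

Neither hidden unit computes the final answer on its own. The useful output only appears once the second layer combines them, which is the same idea that deeper networks apply at much larger scale.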

Why models alone don’t work

A model by itself doesn’t solve a business problem.

In real environments, models must handle:

- Continuous data input

- Changing conditions

- Integration constraints

- Performance expectations

Many systems fail not because the model is weak, but because:

- Data pipelines are incomplete

- Deployment is unstable

- Feedback loops are missing

The difference between a successful project and a failed one often comes down to how well the system is built around the model.

Step 1: building reliable data pipelines

Everything starts with data.

A production-ready system needs pipelines that:

- Ingest data from multiple sources

- Clean and structure it

- Update continuously

Deep learning models depend heavily on data quality. Because they learn patterns automatically, they also learn whatever errors, gaps, and biases the data contains, so poor data leads directly to poor performance.

Unlike small experiments, enterprise systems require constant data flow, not static datasets.
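The ingest, clean, and structure steps listed above can be sketched with plain Python generators. This is a minimal sketch under stated assumptions: the record schema (`user_id`, `amount`) and the function names are hypothetical, and a production pipeline would typically read from queues, databases, or files rather than in-memory lists.

```python
from typing import Iterable, Iterator

REQUIRED_FIELDS = ("user_id", "amount")  # hypothetical schema for this example

def ingest(*sources: Iterable[dict]) -> Iterator[dict]:
    """Merge records from several sources into one stream."""
    for source in sources:
        yield from source

def clean(records: Iterable[dict]) -> Iterator[dict]:
    """Drop incomplete or malformed records and normalize the rest."""
    for record in records:
        if any(record.get(field) is None for field in REQUIRED_FIELDS):
            continue  # missing a required field
        try:
            amount = float(record["amount"])
        except (TypeError, ValueError):
            continue  # amount is not a number
        yield {"user_id": str(record["user_id"]).strip(), "amount": amount}

def run_pipeline(*sources: Iterable[dict]) -> list:
    return list(clean(ingest(*sources)))

web_events = [{"user_id": " u1 ", "amount": "12.5"}, {"user_id": None, "amount": "3"}]
batch_export = [{"user_id": "u2", "amount": "abc"}, {"user_id": "u3", "amount": 7}]
print(run_pipeline(web_events, batch_export))
```

Because each stage is a generator, records flow through one at a time, which fits the continuous data flow described above better than loading a static dataset into memory.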

Step 2: iterative model development

Model development is not a one-time task.

Teams constantly:

- Train new versions

- Test performance

- Adjust parameters

- Compare results

Deep learning models are sensitive to small changes. Even minor adjustments to hyperparameters, random seeds, or training data can significantly affect outcomes.

That’s why development must be structured and repeatable.
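One way to make runs structured and repeatable is to derive a run ID from the configuration and to fix the random seed, so the same settings always map to the same record. The sketch below assumes a hypothetical `train_and_evaluate` function that stands in for real training. It returns a seeded pseudo-random score only so the example runs on its own.

```python
import hashlib
import json
import random

def config_id(config):
    """Stable short ID: identical settings always map to the same run ID."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

def train_and_evaluate(config):
    """Hypothetical stand-in for real training; returns a seeded pseudo-score."""
    rng = random.Random(config["seed"])
    return round(rng.random(), 4)

def run_experiment(config):
    """Record the config next to the result so runs can be compared later."""
    return {
        "run_id": config_id(config),
        "config": dict(config),
        "score": train_and_evaluate(config),
    }

def best_run(runs):
    return max(runs, key=lambda run: run["score"])

first = run_experiment({"learning_rate": 0.01, "seed": 7})
repeat = run_experiment({"seed": 7, "learning_rate": 0.01})
print(first["run_id"] == repeat["run_id"])  # True: key order does not matter
```

Dedicated experiment trackers do far more than this, but the principle is the same: every result stays tied to the exact settings that produced it.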

Step 3: infrastructure for training

Training deep learning models requires significant resources.

This includes:

- GPUs or distributed systems

- High-performance storage

- Scalable computing environments

Without proper infrastructure, training becomes slow and inefficient.

Modern systems are designed so that data loading, storage, and compute keep pace with one another, and no single stage becomes the bottleneck as datasets and models grow.

Step 4: deployment in real conditions

Deployment is where systems face real-world challenges.

This step includes:

- Serving models through APIs

- Handling real-time requests

- Scaling under load

The goal is consistency.

Systems must perform reliably even when:

- Traffic increases

- Inputs vary

- Conditions change

Many projects fail at this stage because deployment is treated as an afterthought rather than planned from the start.
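Part of performing reliably when inputs vary is rejecting bad requests cleanly instead of crashing. The sketch below shows a framework-agnostic request handler that could sit behind any HTTP API. The `predict` function is a hypothetical stand-in for a trained model, and the `features` field name is an assumption made for the example.

```python
import json

def predict(features):
    """Hypothetical stand-in for a trained model: returns the mean of the inputs."""
    return sum(features) / len(features)

def handle_request(body):
    """Return (status_code, json_body) for one prediction request."""
    try:
        payload = json.loads(body)
        features = payload["features"]
        if not isinstance(features, list) or not features:
            raise ValueError("features must be a non-empty list")
        if not all(
            isinstance(x, (int, float)) and not isinstance(x, bool)
            for x in features
        ):
            raise ValueError("features must be numeric")
    except (KeyError, TypeError, ValueError) as exc:
        # Malformed JSON also lands here: json.JSONDecodeError is a ValueError.
        return 400, json.dumps({"error": str(exc)})
    return 200, json.dumps({"prediction": predict(features)})

print(handle_request('{"features": [1, 2, 3]}'))
print(handle_request('{"features": []}')[0])
```

Keeping validation separate from the model call means unexpected inputs produce a predictable error response, and the model only ever sees data in the shape it was trained on.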

Step 5: integration with business workflows

A model becomes useful only when it connects to real processes.

This involves integrating with:

- Internal systems

- Databases

- External services

For example:

- A recommendation model connects to a website

- A fraud detection system connects to payment processing

- A chatbot connects to backend services

Without integration, models remain isolated and unused.

Step 6: orchestration of components

Modern deep learning solutions involve multiple components.

These may include:

- Data processors

- Feature extractors

- Prediction models

- Decision logic

Orchestration coordinates how these components interact.

It ensures:

- Tasks run in the right order

- Dependencies are handled

- Errors are managed

This is what turns separate tools into a working system.
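The three guarantees above (ordering, dependencies, error handling) can be sketched with the standard library's `graphlib.TopologicalSorter`, available in Python 3.9 and later. The task names and the `run_workflow` helper are hypothetical, and a real orchestrator would add retries, scheduling, and persistence on top of this.

```python
from graphlib import TopologicalSorter

def run_workflow(tasks, dependencies):
    """Run tasks in dependency order; skip anything downstream of a failure.

    tasks: name -> callable taking the dict of results so far
    dependencies: name -> set of task names that must run first
    """
    order = list(TopologicalSorter(dependencies).static_order())
    results, failed = {}, {}
    for name in order:
        blockers = sorted(d for d in dependencies.get(name, ()) if d in failed)
        if blockers:
            failed[name] = f"skipped: upstream failure in {blockers[0]}"
            continue
        try:
            results[name] = tasks[name](results)
        except Exception as exc:
            failed[name] = f"error: {exc}"
    return order, results, failed

# Hypothetical four-stage workflow mirroring the components listed above.
DEMO_TASKS = {
    "process": lambda r: [1.0, 2.0, 3.0],
    "features": lambda r: [x * 2 for x in r["process"]],
    "predict": lambda r: sum(r["features"]),
    "decide": lambda r: "approve" if r["predict"] > 10 else "review",
}
DEMO_DEPENDENCIES = {
    "process": set(),
    "features": {"process"},
    "predict": {"features"},
    "decide": {"predict"},
}

order, results, failed = run_workflow(DEMO_TASKS, DEMO_DEPENDENCIES)
print(order)              # ['process', 'features', 'predict', 'decide']
print(results["decide"])  # approve
```

If one stage raises an exception, every stage that depends on it is marked as skipped rather than run on missing inputs, so a single failure stays visible and contained.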

Step 7: monitoring and continuous improvement

Once deployed, systems must be monitored.

This includes:

- Tracking performance

- Detecting data drift

- Logging errors

- Updating models

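Deep learning systems are not static. The data they see keeps changing, so a model that was accurate at launch can gradually fall out of step with real inputs.

Without monitoring, performance declines gradually and often goes unnoticed.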
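A simple way to illustrate data drift detection is to compare a live feature's mean against the training baseline, measured in baseline standard deviations. This is a crude heuristic rather than a full drift test. The function names and the 0.5 threshold are assumptions chosen for the example, and production systems usually compare whole distributions across many features.

```python
import statistics

def mean_shift(baseline, live):
    """Distance between live and baseline means, in baseline standard deviations."""
    spread = statistics.pstdev(baseline)
    if spread == 0:
        raise ValueError("baseline has no variance")
    return abs(statistics.fmean(live) - statistics.fmean(baseline)) / spread

def has_drifted(baseline, live, threshold=0.5):
    """Flag drift when the shift exceeds the threshold (an assumed cutoff)."""
    return mean_shift(baseline, live) > threshold

training_values = [10, 12, 11, 13, 9, 11, 10, 12]
print(has_drifted(training_values, [11, 10, 12, 11]))  # False: same mean as baseline
print(has_drifted(training_values, [15, 16, 14, 15]))  # True: mean moved from 11 to 15
```

A check like this would typically run on a schedule against recent inputs, with a flagged result triggering investigation or retraining.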

Common mistakes in building systems

Even strong teams make similar mistakes:

- Treating the model as the final product

- Ignoring data pipeline complexity

- Underestimating infrastructure needs

- Skipping monitoring and updates

These issues often appear after deployment, when systems are harder to fix.

How modern systems are designed

Deep learning systems in 2026 follow a few clear principles:

Modular design

Components can be updated independently.

Real-time processing

Systems handle continuous data streams.

Scalable infrastructure

Resources adjust based on demand.

Integration-first approach

Models are built to fit into workflows.

Continuous learning

Systems improve over time as new data and feedback are used to retrain and update models.

Models vs systems: the key difference

The difference is simple but important.

A model:

- Processes data

- Produces predictions

A system:

- Collects and prepares data

- Runs models

- Integrates outputs

- Adapts over time

Most failures happen when teams stop at the model.

Success comes from building the full system.

Final thoughts

Deep learning has moved beyond isolated experiments.

The real challenge is building systems that:

- Work with real data

- Integrate into operations

- Scale over time

Understanding this shift—from models to systems—is essential for any company working with AI today.

In 2026, success doesn’t come from having the most advanced model. It comes from building systems that make those models usable, reliable, and effective in real-world environments.